Marketing analysts face a measurement crisis: 73% of customers interact with multiple touchpoints before purchase, yet privacy regulations, walled gardens, and cookie deprecation now obscure 42–65% of customer journeys. Attribution model biases waste up to 26% of marketing budgets by systematically undervaluing upper-funnel channels.

Key Takeaways

• 73% of customers interact with multiple touchpoints before purchase, yet attribution model biases waste up to 26% of marketing budgets.

• Last-touch attribution systematically undervalues upper-funnel channels like content marketing and social media across all business models.

• Attribution model selection requires evaluating user tracking capability, conversion path length, data volume, and sales cycle—not applying one model universally.

• Data-driven algorithmic models require >1,000 conversions/month, >10,000 conversion paths, and dedicated data science resources; below those thresholds, rule-based models like time-decay or position-based are sufficient.

• Position-based 40/20/40 attribution fits B2B SaaS and balanced journeys with 4–8 touchpoints without requiring massive data volume or ML expertise.

This guide provides actionable strategies for implementing cross-channel attribution in 2026's fragmented measurement landscape. It covers attribution model selection frameworks, diagnostic tools to detect when models are lying, and tool comparisons for Improvado, SegmentStream, Northbeam, AdBeacon, Rockerbox, LeadsRX, Triple Whale, Ruler Analytics, and Google Analytics 4.

With the right approach, you can assign accurate conversion credit across touchpoints, optimize every marketing dollar, and avoid the hidden costs that derail most attribution implementations.

What Is Cross-Channel Attribution?

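Cross-channel attribution assigns credit for a conversion to the touchpoints a customer encountered across channels (paid search, social, email, display, offline) before converting. It answers critical measurement questions that single-channel analysis cannot: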

• Which touchpoints deserve credit for this conversion?

• How should credit be distributed across a 7-touch journey spanning 45 days?

• Is your last-click model systematically undervaluing upper-funnel channels like content marketing and social media?

• When do assisted conversions outnumber last-click conversions by enough to justify switching attribution models?

The core challenge is defining an attribution window—the time period during which touchpoints are eligible to receive credit. A 7-day window captures only immediate conversions, while a 90-day window includes upper-funnel research touches but risks attributing conversions to irrelevant interactions.
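A minimal sketch of how a window decides eligibility, assuming each touchpoint carries a channel name and a timestamp and that the window is counted backward from the conversion. The `Touch` class and `eligible_touches` function are hypothetical names, not any tool's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Touch:
    channel: str
    at: datetime


def eligible_touches(touches, converted_at, window_days):
    """Return the touches that fall inside the window ending at the conversion time."""
    window_start = converted_at - timedelta(days=window_days)
    return [t for t in touches if window_start <= t.at <= converted_at]


conversion = datetime(2026, 3, 31)
journey = [
    Touch("content", conversion - timedelta(days=45)),
    Touch("paid_social", conversion - timedelta(days=20)),
    Touch("email", conversion - timedelta(days=5)),
    Touch("branded_search", conversion - timedelta(days=1)),
]

print([t.channel for t in eligible_touches(journey, conversion, 7)])
# ['email', 'branded_search']
print([t.channel for t in eligible_touches(journey, conversion, 90)])
# ['content', 'paid_social', 'email', 'branded_search']
```

With a 7-day window, the content and paid social touches from weeks earlier receive no credit at all, which is how short windows undercount upper-funnel channels.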

Cross-channel attribution differs fundamentally from cross-channel marketing strategy. Marketing strategy addresses how channels work together to move customers through the funnel. Attribution addresses how to measure each channel's contribution to conversions. Conflating these creates measurement frameworks that can't answer the questions analysts actually need to solve.

Attribution Model Types and Selection Framework

Attribution models define how conversion credit is distributed across touchpoints. The right model depends on your conversion path length, data volume, sales cycle, and business model. Using the wrong model creates systematic biases that misallocate budgets.

Core Attribution Model Types

| Attribution Model | Credit Assignment | Best Use Case | Key Bias |
|---|---|---|---|
| Last-Touch | 100% to final touchpoint | Short sales cycles (<7 days), single dominant channel, conversion paths <3 touchpoints for 80%+ of conversions | Systematically undervalues all awareness and consideration touches; over-credits branded search and direct traffic |
| First-Touch | 100% to initial touchpoint | Awareness campaigns, top-of-funnel optimization, measuring new channel launches | Ignores all nurturing and conversion touches; over-credits display and content marketing |
| Linear | Equal credit to all touchpoints | Brand-building, awareness campaigns, journeys where all touches contribute equally | Treats critical conversion touch same as passive impression; dilutes signal in long paths (10+ touches) |
| Time-Decay | Exponentially more to recent touches | Sales cycles >30 days, avg path length >7 touchpoints, direct-response focus | Under-credits early research; over-values retargeting and remarketing |
| Position-Based (U-Shaped) | 40% first, 40% last, 20% middle | Balanced journeys, B2B SaaS with defined awareness and decision stages, 4–8 touchpoints typical | Assumes middle touches are less valuable; doesn't adapt to journey variations |
| Data-Driven / Algorithmic | ML-based on conversion path patterns | >1,000 conversions/month, >10,000 conversion paths, high-volume ecommerce | Black box opacity; requires massive data to avoid overfitting; learns platform biases if trained on platform data |
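The rule-based rows of this table are simple enough to express directly. A minimal sketch, assuming a journey is an ordered list of channel names; the 7-day half-life for time-decay and the 50/50 split for two-touch paths are illustrative assumptions, since the table does not fix them. Data-driven models are omitted because they are learned from your data rather than defined by a rule.

```python
from collections import defaultdict


def last_touch(n):
    return [0.0] * (n - 1) + [1.0]


def first_touch(n):
    return [1.0] + [0.0] * (n - 1)


def linear(n):
    return [1.0 / n] * n


def position_based(n, first=0.4, last=0.4):
    if n == 1:
        return [1.0]
    if n == 2:
        # Assumption: with no middle touches, split credit evenly between first and last.
        return [0.5, 0.5]
    middle = (1.0 - first - last) / (n - 2)  # the remaining 20% is shared by all middle touches
    return [first] + [middle] * (n - 2) + [last]


def time_decay(days_before_conversion, half_life_days=7.0):
    # A touch's raw weight halves for every `half_life_days` it occurred before conversion.
    raw = [0.5 ** (d / half_life_days) for d in days_before_conversion]
    total = sum(raw)
    return [w / total for w in raw]


def credit_by_channel(channels, weights):
    """Sum per-touch weights into per-channel credit (a channel may appear more than once)."""
    credit = defaultdict(float)
    for channel, weight in zip(channels, weights):
        credit[channel] += weight
    return dict(credit)


path = ["content", "paid_social", "email", "branded_search"]
days = [30, 14, 7, 0]
u_shaped = credit_by_channel(path, position_based(len(path)))
print({ch: round(w, 2) for ch, w in u_shaped.items()})
# {'content': 0.4, 'paid_social': 0.1, 'email': 0.1, 'branded_search': 0.4}
decayed = credit_by_channel(path, time_decay(days))
print(max(decayed, key=decayed.get))
# branded_search
```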

Attribution Model Selection Decision Tree

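Choose a model based on your data characteristics and business context: whether you can track individual users across touchpoints, how long typical conversion paths are, how many conversions you record each month, and how long your sales cycle runs.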
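The thresholds this guide uses can be condensed into a rough, rule-based helper. This is a sketch, not a validated decision tree: the function name, the order in which the checks run, and the linear fallback are assumptions; the numeric cutoffs come from the model table above.

```python
def recommend_model(
    can_track_users: bool,
    conversions_per_month: int,
    conversion_paths: int,
    avg_path_length: float,
    share_of_paths_under_3_touches: float,
    sales_cycle_days: int,
    has_data_science_team: bool,
) -> str:
    # Without user-level tracking there are no paths to attribute; use aggregate methods.
    if not can_track_users:
        return "aggregate measurement (MMM, incrementality tests)"
    # Data-driven models need both volume and people to maintain them.
    if conversions_per_month > 1_000 and conversion_paths > 10_000 and has_data_science_team:
        return "data-driven"
    # Short cycles where 80%+ of conversions have fewer than 3 touches.
    if sales_cycle_days < 7 and share_of_paths_under_3_touches >= 0.8:
        return "last-touch"
    # Long cycles with long paths.
    if sales_cycle_days > 30 and avg_path_length > 7:
        return "time-decay"
    # Balanced journeys of 4-8 touchpoints.
    if 4 <= avg_path_length <= 8:
        return "position-based (40/20/40)"
    return "linear"  # assumption: fallback when no rule above matches


# A B2B SaaS team with 300 conversions/month, 6-touch paths, and a 60-day cycle:
print(recommend_model(True, 300, 2_000, 6, 0.1, 60, False))
# position-based (40/20/40)
```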

Attribution Model vs. Business Model Fit

Not all attribution models fit all business models. This matrix shows model suitability by business type:

| Business Model | Last-Touch | Linear | Time-Decay | Position-Based | Algorithmic |
|---|---|---|---|---|---|
| B2C Ecommerce | Fair — works for impulse buys, short paths | Good — balanced for brand-building | Excellent — values retargeting appropriately | Good — if 5–8 touchpoints typical | Excellent — high volume supports ML |
| B2B SaaS | Poor — ignores 12+ research touches | Poor — over-credits early research, under-credits demo/trial | Fair — misses dark periods during internal approval | Excellent — 40/20/40 fits awareness → trial → close | Good — if >1,000 deals/month |
| Marketplace | Good — short decision cycles | Fair — treats search and browse equally | Good — values recent search intent | Fair — middle touches often passive browsing | Excellent — high volume, clear conversion patterns |
| Mobile App | Excellent — install = conversion, short path | Fair — dilutes signal in 2–3 touch paths | Good — emphasizes final install driver | Fair — overkill for short paths | Good — if measuring in-app events, not just installs |
| Retail + Online | Poor — misses online research → store visit | Good — values all online and offline touches | Good — emphasizes recent store visit drivers | Excellent — awareness online, close in-store | Fair — requires store visit tracking, complex |
| Lead Gen | Fair — if form fill = immediate goal | Good — values nurture touches equally | Excellent — emphasizes late-stage conversion drivers | Good — if clear awareness → consideration → decision | Fair — lead quality varies, model may optimize for bad leads |

Example: B2B SaaS with position-based attribution is rated "Excellent" because SaaS buyers research intensively (avg 12 touchpoints over 45–90 days), then go dark during internal approval processes. Linear attribution over-credits early research touches and under-credits the late-stage demo or free trial that actually closed the deal. Position-based (40% first touch, 20% middle, 40% last touch) better reflects the awareness → nurture → close pattern while avoiding the black-box complexity of algorithmic models that most B2B teams lack the data volume to support.

Cross-Channel Attribution Tools Ranked: 2026 Comparison

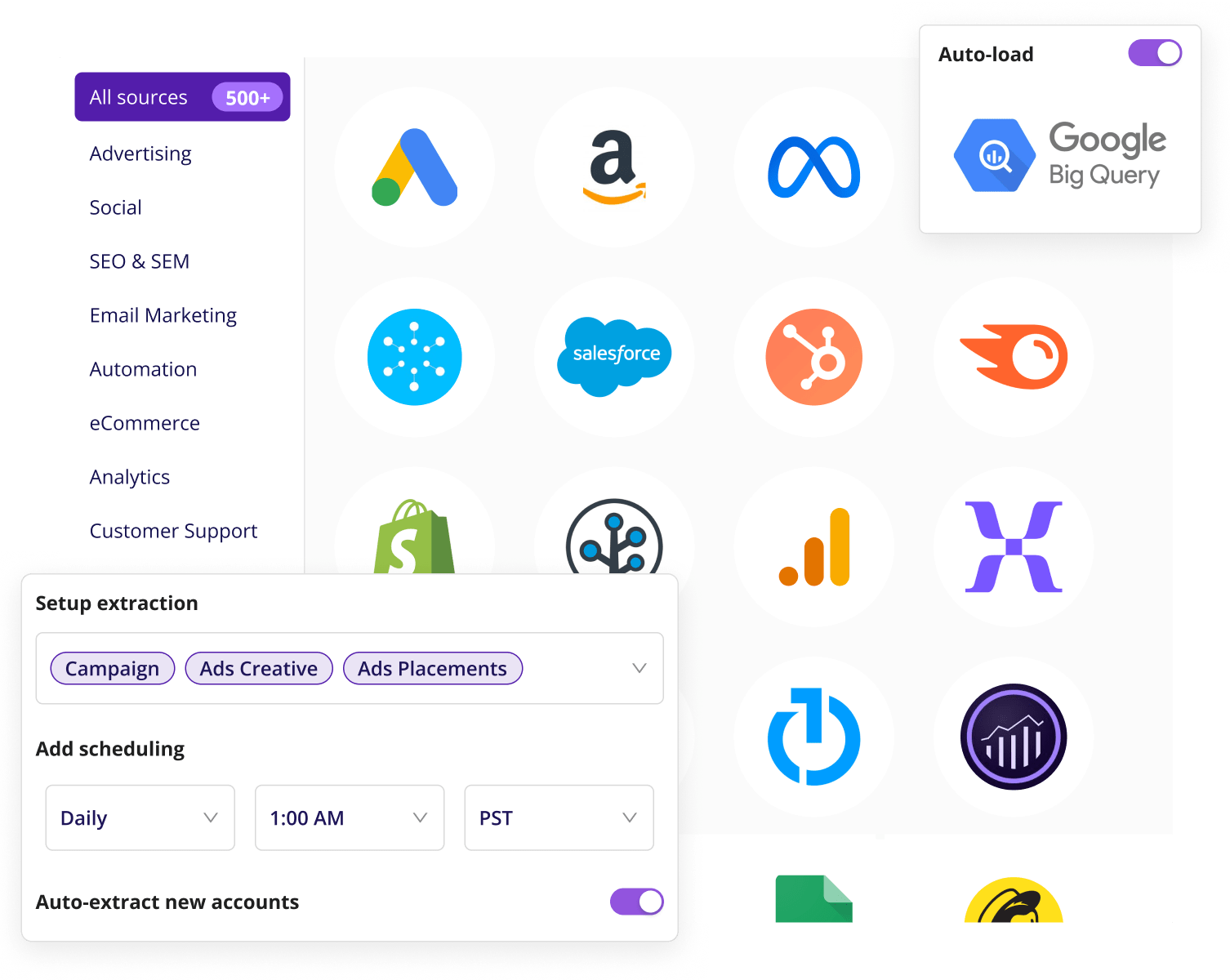

The top cross-channel attribution tools in 2026 are Improvado, SegmentStream, AdBeacon, Northbeam, Rockerbox, LeadsRX, Triple Whale, and Ruler Analytics. These tools synthesize data across digital, offline, and CRM channels, but differ significantly in attribution methodology, data volume requirements, and ideal customer profile.

| Tool | Best For | Attribution Models | Starting Price | Offline Support | Setup Complexity |
|---|---|---|---|---|---|
| Improvado | Enterprise teams that need 1,000+ connectors, real-time dashboards, AI-driven insights | All rule-based + custom algorithmic via Marketing Cloud Data Model (MCDM) | Custom pricing | Yes — CRM, call tracking, store visit data | Low — dedicated CSM, professional services included, operational within days |
| SegmentStream | Privacy-first attribution, conversion modeling, cross-device identity resolution | Automated cross-channel ROAS, visit scoring, algorithmic MTA, incrementality | Custom (enterprise) | Yes — full-funnel including offline data | Medium — expert implementation included |
| AdBeacon | Real-time actionable insights, creative-level analytics | Hybrid MTA with ML pattern identification, incrementality testing | Not disclosed | Limited | Low — fast deployment |
| Northbeam | Ecommerce brands spending $500K+ monthly on ads, Shopify/BigCommerce | MTA + MMM hybrid, server-side tracking resists iOS privacy changes | $2,000/mo | Limited — digital focus | Medium — requires ad spend scale |
| Rockerbox | Omni-channel enterprise, simultaneous model comparison, multi-market setups | First-touch, linear, time-decay, algorithmic, incrementality holdouts, MMM | $2,000/mo | Yes — broad digital + offline | High — longer setup, often needs analyst support |
| LeadsRX | B2B marketing and data teams, account-based journey mapping, CRM revenue tying | First-touch, last-touch, linear, W-shaped, custom rules | Not disclosed | Yes — full-funnel accounts + offline | Medium — B2B pipeline complexity |
| Triple Whale | Shopify profit tracking, creative tracking, real-time reporting | MTA for paid/owned channels, funnel analysis, revenue attribution | $129/mo | No — Shopify-native only | Low — plug-and-play for Shopify |
| Ruler Analytics | B2B closed-loop attribution, CRM pipeline and revenue tying | Full journey attribution linked to CRM deals and revenue | Not disclosed | Yes — CRM + call tracking | Medium — CRM integration setup |
| Google Analytics 4 | Free baseline attribution, small teams with limited budgets | Data-driven, paid and organic last click, Google paid channels last click (first-click, linear, time-decay, and position-based were retired in 2023) | Free | Limited — requires manual imports | Low — free but feature-limited |

Tool Selection Guidance by Use Case

• B2B Marketing and Data Teams: LeadsRX and Ruler Analytics lead for B2B. LeadsRX provides visual account-based journey mapping showing all stakeholder touches (critical when buying committees average 6.8 people), full-funnel attribution from first touch to closed revenue, and real-time cross-channel insights that tie marketing activity to pipeline and revenue. Ruler Analytics excels in closed-loop CRM attribution, linking marketing touches to specific deals and revenue amounts for CFO-level reporting.

• Ecommerce/DTC Brands: Northbeam for brands spending $500K+ monthly (not annually) on paid media. Its server-side tracking resists iOS ATT and cookie deprecation better than pixel-based competitors. Triple Whale is the entry-level option for Shopify stores, starting at $129/month and offering profit dashboards and creative-level attribution, but it is limited to the Shopify ecosystem.

• Enterprise Omni-Channel: Improvado and Rockerbox. Improvado offers 1,000+ connectors including offline channels (CRM, call tracking, store visits), a pre-built Marketing Cloud Data Model (MCDM) for faster setup, and an AI Agent for conversational analytics. Limitation: pricing is custom, and smaller teams may find it prohibitive. Rockerbox handles multi-market setups and runs multiple attribution models simultaneously for comparison, but requires longer setup (often 60–90 days) and analyst support to interpret outputs.

• Privacy-First Strategies: SegmentStream leads in 2026 for first-party data strategies. Automated conversion modeling recovers 30–40% of lost touchpoints from iOS ATT and cookie deprecation. Self-reported attribution (QR codes, coupons) and visit scoring provide unbiased logic for complex journeys that black-box AI models miss.

Common Challenges in Cross-Channel Attribution and How to Solve Them

Most attribution programs run into the same obstacles. Industry data shows 42–65% of customer journeys are now partially or fully unobservable due to privacy regulations, walled gardens, and cross-device gaps, and attribution model biases waste up to 26% of marketing budgets. These challenges persist despite AI advancements because platforms like Meta, Google, and Amazon use conflicting attribution rules (view-through vs. click-through conversions), creating unreliable customer journey data.

1. Data Silos and Integration Complexities

Your data lives in dozens of different platforms—Google Ads, Meta, LinkedIn, Salesforce, HubSpot, email providers, call tracking systems. Each has its own format, naming conventions, and attribution logic. Manually combining this data in spreadsheets is time-consuming, error-prone, and unsustainable.

Marketing teams managing 10+ channels spend an average of 12.4 hours per week reconciling data discrepancies across platforms. For 18% of teams, this workload exceeds 20 hours, half of a standard 40-hour workweek. This reconciliation time sink delays reporting, prevents real-time optimization, and blocks analysts from higher-value work like insight generation and testing.
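Much of that reconciliation time goes into mapping each platform's naming conventions onto one channel taxonomy. A minimal sketch, assuming channels are inferred from `utm_source`/`utm_medium` values; the alias tables and the `canonical_channel` function are hypothetical examples to adapt, not a standard.

```python
import re

# Hypothetical alias tables; extend them with the variants that appear in your own data.
MEDIUM_ALIASES = {
    "cpc": "paid_search",
    "ppc": "paid_search",
    "paidsearch": "paid_search",
    "paidsocial": "paid_social",
    "email": "email",
    "e-mail": "email",
    "newsletter": "email",
    "organic": "organic_search",
}
SOCIAL_SOURCES = {"facebook", "fb", "meta", "instagram", "ig", "linkedin", "tiktok"}


def _normalize(value):
    """Lowercase, then drop whitespace and underscores so 'Paid Social' and 'paid_social' match."""
    return re.sub(r"[\s_]+", "", (value or "").lower())


def canonical_channel(utm_source, utm_medium):
    source, medium = _normalize(utm_source), _normalize(utm_medium)
    if not source and not medium:
        return "direct"
    # Paid clicks from social platforms are paid social, not paid search.
    if medium in {"cpc", "ppc", "paid"} and source in SOCIAL_SOURCES:
        return "paid_social"
    return MEDIUM_ALIASES.get(medium, "other")


rows = [("google", "cpc"), ("Facebook", "CPC"), ("hubspot", "E-mail"), (None, None), ("linkedin", "Paid Social")]
print([canonical_channel(s, m) for s, m in rows])
# ['paid_search', 'paid_social', 'email', 'direct', 'paid_social']
```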

2. Tracking Gaps and Privacy-Driven Signal Loss

The same customer often shows up as multiple users. They might be "user123" on a laptop, "userabc" on a phone, and an email address in your CRM. Without linking these identities, you can't see their full customer journey.

Tracking users across devices and platforms is harder than ever in 2026. Cookie deprecation, privacy laws like GDPR and CCPA, Apple's iOS App Tracking Transparency (ATT), and walled garden platforms now obscure 42–65% of customer journeys. This fragmentation and lack of a universal identifier makes it difficult to understand how different marketing efforts contribute to conversions and long-term customer value.

Cross-device tracking gaps create 35% visibility blind spots. A customer might research on mobile, compare options on desktop, and convert on tablet, appearing as three separate users in your analytics. Attribution models then systematically undervalue mobile (which drives awareness but rarely converts) and over-credit the device where the conversion happens (often desktop), which captures the late-stage comparison and purchase but didn't initiate the journey.
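Linking identities is a graph problem: any two identifiers observed together (for example, a device ID at the moment a user logs in with an email) belong to the same person, and those links are transitive. A minimal union-find sketch of deterministic stitching on shared email, login ID, or phone; the `type:value` identifier format and the function names are hypothetical conventions.

```python
class UnionFind:
    """Disjoint-set forest over arbitrary hashable identifiers."""

    def __init__(self):
        self.parent = {}

    def find(self, x):
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving keeps trees shallow
            x = self.parent[x]
        return x

    def union(self, a, b):
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a


def stitch_identities(observed_pairs):
    """Group identifiers into people from pairs seen together (deterministic links only)."""
    uf = UnionFind()
    for a, b in observed_pairs:
        uf.union(a, b)
    people = {}
    for identifier in list(uf.parent):
        people.setdefault(uf.find(identifier), set()).add(identifier)
    return list(people.values())


pairs = [
    ("device:laptop-123", "email:ana@example.com"),  # login on laptop
    ("device:phone-abc", "email:ana@example.com"),   # login on phone
    ("email:ana@example.com", "crm:0042"),           # CRM record keyed by email
    ("device:tablet-9", "login:u-77"),               # a different, unlinked user
]
for person in stitch_identities(pairs):
    print(sorted(person))
```

Probabilistic device graphs add inferred edges to the same structure, which is exactly where the consent and accuracy problems discussed later come from.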

3. Attribution Model Selection Errors

Using the wrong attribution model creates systematic biases that misallocate budgets. The three most common selection errors are:

• Using ML models with insufficient data: Data-driven and algorithmic models require >1,000 conversions per month and >10,000 conversion paths to produce reliable outputs. Below these thresholds, models overfit to noise and produce unstable results—top-3 channels change week-to-week, making optimization impossible.

• Mismatched attribution windows: A 7-day attribution window for a product with a 45-day sales cycle systematically undercounts upper-funnel channels. Conversely, a 90-day window for impulse purchases over-credits irrelevant touchpoints from months ago.

• Ignoring incrementality: Attribution measures correlation (which touchpoints were present), not causation (which touchpoints caused the conversion). Many customers who click your branded search ad would have converted anyway via organic search. Last-click attribution over-credits branded search by 40–60% in most accounts.

4. Lack of Real-Time Insights

By the time you've manually compiled your reports, the data is already outdated. Industry surveys show 88% of enterprise marketing teams lack real-time access to cross-channel performance data for strategic optimization decisions. You can't react quickly to changes in campaign performance or market trends. Opportunities are missed and problems escalate.

The underlying cause is manual data preparation. When data prep takes days, insights arrive too late to matter. A paid search campaign underperforming by 40% continues wasting budget for 3–5 days until the weekly report surfaces the problem.
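Automating even a simple daily check closes most of that gap. A minimal sketch, assuming you can pull daily ROAS per campaign; the 7-day baseline and 40% drop threshold are illustrative parameters, and `flag_underperformers` is a hypothetical name.

```python
from statistics import mean


def flag_underperformers(daily_roas, baseline_days=7, drop_threshold=0.40):
    """Compare each campaign's latest day against its trailing baseline.

    daily_roas maps campaign -> list of daily ROAS values, oldest first.
    Returns {campaign: fractional drop} for campaigns at or past the threshold.
    """
    flagged = {}
    for campaign, series in daily_roas.items():
        if len(series) < baseline_days + 1:
            continue  # not enough history for a baseline
        baseline = mean(series[-(baseline_days + 1):-1])
        latest = series[-1]
        if baseline > 0:
            drop = (baseline - latest) / baseline
            if drop >= drop_threshold:
                flagged[campaign] = round(drop, 2)
    return flagged


roas = {
    "brand_search": [3.0] * 7 + [2.9],
    "generic_search": [2.0] * 7 + [1.1],
    "new_campaign": [1.5, 1.2],
}
print(flag_underperformers(roas))
# {'generic_search': 0.45}
```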

5. Cross-Device and Multi-Touch Attribution Gaps

Customers interact with brands across an average of 6–8 touchpoints spanning multiple devices before converting. Attribution models that can't connect these fragmented interactions systematically overvalue easy-to-track channels (desktop conversions, last-click branded search) and undervalue hard-to-track channels (mobile research, social discovery, cross-device journeys).

This creates 35% visibility blind spots where critical awareness and consideration touches disappear from attribution data, leading to budget misallocation that systematically defunds upper-funnel channels.

When Cross-Channel Attribution Fails: 5 Implementation Disasters and Recovery Procedures

Attribution implementations fail predictably. Understanding these failure modes helps teams avoid expensive mistakes and recognize problems early enough to correct course.

Failure Case #1: Pixel Conflicts Breaking Tracking

• What happened: A mid-market ecommerce brand implemented Northbeam attribution on top of existing Google Analytics 4, Meta Pixel, and TikTok Pixel tracking. Within 48 hours, conversion tracking dropped 60% across all platforms. The attribution tool's JavaScript conflicted with existing pixels, causing race conditions where multiple pixels tried to fire simultaneously and blocked each other.

• Root cause: No tag audit before implementation. Team didn't map existing tracking infrastructure or test for conflicts in staging environment.

• Recovery procedure:

• Immediately roll back to previous tracking state to restore data collection

• Conduct tag audit using Google Tag Manager preview mode or browser dev tools to identify all active pixels

• Implement tag sequencing: fire attribution platform pixel first, then platform-native pixels 200ms later using GTM sequencing rules

• Test in staging environment for 7 days with parallel tracking validation before production deploy

• Monitor conversion counts daily for 14 days post-launch to catch drops within 24 hours

Prevention threshold: If you have >3 active tracking pixels, require a 30-day parallel testing period. If conversion tracking drops >10% in the first 72 hours, trigger the rollback protocol automatically.
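These thresholds translate into two small checks. A minimal sketch, assuming you have daily conversion counts from before and after launch; comparing each of the first three days against the pre-launch daily average is one interpretation of the 72-hour rule, the 7-day default comes from the staging step above, and both function names are hypothetical.

```python
def parallel_test_days(active_pixels):
    """30 days of parallel testing if more than 3 pixels are active, else the 7-day staging test."""
    return 30 if active_pixels > 3 else 7


def should_roll_back(pre_launch_daily, post_launch_daily, max_drop=0.10, window_days=3):
    """Return (roll_back, day, drop) for the first post-launch day more than max_drop below baseline."""
    baseline = sum(pre_launch_daily) / len(pre_launch_daily)
    for day, count in enumerate(post_launch_daily[:window_days], start=1):
        drop = (baseline - count) / baseline
        if drop > max_drop:
            return True, day, round(drop, 3)
    return False, None, None


print(parallel_test_days(5))
# 30
print(should_roll_back([200, 210, 190, 205, 195, 200, 200], [198, 170, 80]))
# (True, 2, 0.15)
```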

Failure Case #2: Model Mismatch (ML on Insufficient Data)

• What happened: A B2B SaaS company with 300 conversions per month implemented data-driven attribution in Google Analytics 4. The model produced wildly unstable results—top-3 channels changed every week, attributed conversion values fluctuated 40%+ week-over-week, and credit allocation bore no resemblance to known customer journey patterns (e.g., organic search received 5% credit despite driving 40% of demo requests).

• Root cause: Insufficient data volume. Data-driven models require >1,000 conversions per month to produce stable outputs. With 300 conversions, the model overfitted to weekly noise rather than learning genuine patterns.

• Recovery procedure:

• Switch immediately to rule-based attribution (position-based 40/20/40 for B2B, time-decay for ecommerce)

• Continue collecting data for data-driven model without using it for decisions

• Run parallel models (rule-based for decisions, data-driven shadow mode) once you reach 1,000 conversions/month

• Validate model stability: if top-3 channels remain consistent for 8 consecutive weeks AND credit allocation changes <15% week-over-week, model is ready

• Transition to data-driven model only after 90-day validation period shows stability

Prevention threshold: Never use ML/data-driven models below 1,000 conversions/month. For B2B with long sales cycles, use deal count not lead count—if you have 500 leads but only 50 closed deals, you have 50 conversions for attribution purposes.
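The stability criteria from this recovery procedure can be checked automatically each week. A minimal sketch, assuming weekly credit shares per channel exported from the shadow model; treating "top-3" as an unordered set and "<15% change" as the relative change in each channel's share are interpretations, and `is_model_stable` is a hypothetical name.

```python
def top3(shares):
    """Unordered set of the three channels with the highest credit share."""
    return frozenset(sorted(shares, key=shares.get, reverse=True)[:3])


def is_model_stable(weekly_shares, weeks=8, max_change=0.15):
    """weekly_shares: list of {channel: credit share} dicts, oldest first."""
    if len(weekly_shares) < weeks:
        return False
    recent = weekly_shares[-weeks:]
    # Criterion 1: the same top-3 channels in every one of the last `weeks` weeks.
    if len({top3(w) for w in recent}) != 1:
        return False
    # Criterion 2: no channel's share moves by max_change or more (relative) week over week.
    for prev, curr in zip(recent, recent[1:]):
        for channel, share in prev.items():
            if share > 0 and abs(curr.get(channel, 0.0) - share) / share >= max_change:
                return False
    return True


steady = [{"search": 0.40, "social": 0.30, "email": 0.20, "display": 0.10}] * 8
noisy = steady[:7] + [{"search": 0.25, "social": 0.30, "email": 0.20, "display": 0.25}]
print(is_model_stable(steady), is_model_stable(noisy))
# True False
```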

Failure Case #3: Ignoring Incrementality (Attribution ≠ Causation)

• What happened: A retail brand's multi-touch attribution showed branded paid search receiving 55% of conversion credit. Marketing team increased branded search budget by 40%. Revenue remained flat. Incrementality test (turning off branded search for 2 weeks in geo-matched test markets) revealed that 80% of branded search conversions would have happened anyway via organic search—the channel was not incremental.

• Root cause: Attribution measures correlation (which touchpoints were present) not causation (which touchpoints caused the conversion). Customers already searching for your brand name were going to convert regardless of whether they clicked a paid ad or organic result.

• Recovery procedure:

• Identify high-brand-intent channels (branded search, direct traffic, email to existing customers)

• Run incrementality tests: turn off channel in matched geo markets for 14–30 days

• Measure conversion lift in test markets vs. control markets

• Calculate true incremental contribution: if conversions drop 20% when channel is paused, that channel is 20% incremental (not the 55% attribution claimed)

• Reweight the attribution model by applying an incrementality multiplier to channel credits: measured incremental share ÷ attributed share (20% ÷ 55% ≈ 0.36), so branded search credit × 0.36 incrementality factor = true contribution

Prevention threshold: Any channel receiving >30% of conversion credit should undergo incrementality testing before major budget increases. Run annual incrementality audits for all channels receiving >15% of budget.
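The incrementality arithmetic in this recovery procedure can be sketched as follows. It assumes a geo holdout where the channel is paused in test markets and left running in control markets; scaling the test markets' expected conversions by the control markets' change is one common way to read the lift, not the only one, and all function names are hypothetical.

```python
def paused_channel_drop(test_before, test_during, control_before, control_during):
    """Fractional drop in test-market conversions while the channel was paused,
    relative to what the control markets' trend says the test markets should have done."""
    expected = test_before * (control_during / control_before)
    return 1.0 - test_during / expected


def incrementality_factor(measured_drop, attributed_share):
    """Multiplier that converts attributed credit into incremental contribution."""
    return measured_drop / attributed_share


def reweight(credit, factors):
    # Channels without a test keep their credit. The reweighted shares no longer sum to 1;
    # the gap is baseline demand that would have converted anyway.
    return {ch: share * factors.get(ch, 1.0) for ch, share in credit.items()}


drop = paused_channel_drop(test_before=1_000, test_during=840, control_before=1_000, control_during=1_050)
factor = incrementality_factor(drop, attributed_share=0.55)
credit = {"branded_search": 0.55, "paid_social": 0.25, "content": 0.20}
print(round(drop, 2), round(factor, 2))
# 0.2 0.36
print({ch: round(v, 2) for ch, v in reweight(credit, {"branded_search": factor}).items()})
# {'branded_search': 0.2, 'paid_social': 0.25, 'content': 0.2}
```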

Failure Case #4: Platform Lock-In and Migration Costs

• What happened: A DTC brand implemented a specialized attribution platform with custom data models and proprietary identity resolution. After 18 months, the platform's pricing increased 140% and the vendor refused to export historical attribution data in a usable format. Migration to a new platform meant losing 18 months of attribution history and rebuilding all custom reports.

• Root cause: No data portability requirements in vendor contract. Custom data models built in platform-specific formats without documentation.

• Recovery procedure:

• Negotiate data export before contract termination—include all raw event data, not just aggregated reports

• Run parallel tracking (old platform + new platform) for 90 days to recreate historical benchmarks in new system

• Document all custom attribution rules and conversion definitions to replicate in new platform

• Accept that some historical comparisons will be imperfect—focus on establishing reliable baseline in new platform

• Build attribution logic in vendor-neutral layer (your data warehouse) rather than inside proprietary platforms going forward

Prevention threshold: Before signing, require: (1) raw event-level data export in CSV/JSON, (2) documentation of all attribution algorithms, (3) 90-day parallel tracking allowance in contract. If vendor refuses, negotiate lower pricing or choose different vendor.

Failure Case #5: Privacy Compliance Gaps

• What happened: A health tech company implemented cross-device attribution using probabilistic device graphs (behavioral fingerprinting to link users across devices without login). A GDPR audit found that the company could not rely on "legitimate interest" as the lawful basis for this tracking: explicit consent was required, and users hadn't given it. The company faced potential fines and had to delete 14 months of cross-device attribution data.

• Root cause: Attribution vendor used probabilistic matching without explicit consent mechanism. Legal team not involved in vendor selection process.

• Recovery procedure:

• Immediately pause probabilistic cross-device tracking pending legal review

• Implement explicit consent for cross-device tracking in privacy banner

• Switch to deterministic-only identity resolution (email, login ID, phone) for users who consent

• Accept degraded cross-device visibility for non-consenting users—use aggregated measurement (MMM) instead

• Involve legal/privacy team in all attribution vendor selections going forward

Prevention threshold: For any healthcare, finance, or EU-serving company, require a SOC 2 Type II report and documented GDPR, CCPA, and HIPAA compliance from the attribution vendor. Require legal review of the data processing addendum before the pilot begins. If the vendor can't provide this documentation, eliminate it from consideration regardless of feature set.

Hidden Costs of Cross-Channel Attribution Platforms

Published pricing for attribution platforms typically shows only the software subscription cost. Total cost of ownership includes software, implementation engineering time, ongoing data quality maintenance, data warehouse infrastructure, and opportunity cost of migration. These hidden costs often exceed software subscription by 2–4×.

| Attribution Approach | Obvious Costs | Hidden Costs | Break-Even Threshold |
|---|---|---|---|
| Google Analytics 4 (Free) | $0 software cost | 40–60 hours setup (event tracking, conversion mapping, custom reports), 5–8 hours/week ongoing report maintenance, attribution limited to data-driven and last-click models with no offline data support, cross-device tracking requires Firebase SDK (20+ hours integration) | Justified when: ad spend <$20K/month, <5 paid channels, no offline conversions, team comfortable with GA4 UI limitations |
| Entry-Level SaaS (e.g., Triple Whale) | $129–$500/month subscription ($1,500–$6,000/year) | 20–40 hours setup (platform integration, conversion mapping), 2–4 hours/week QA (validating platform counts match ad platform reports), platform lock-in risk (limited data export), often requires supplemental tools for full-funnel view (adds $100–$300/month) | Justified when: ad spend $20K–$100K/month, Shopify or single-platform ecommerce, limited offline conversions, need faster insights than GA4 but not full customization |
| Mid-Market Platform (e.g., Northbeam, Rockerbox) | $2,000–$5,000/month subscription ($24K–$60K/year) | ~1 week setup (pixel deployment, data integration, custom model configuration), 6–10 hours/week ongoing QA and model tuning, data warehouse recommended for custom analysis (adds $200–$1,000/month), often need analyst support (0.25–0.5 FTE, $30K–$60K/year) | Justified when: ad spend >$100K/month, 5+ paid channels, offline conversions 10–30% of total, need server-side tracking for iOS privacy resilience, team has analytical resources to interpret outputs |
| Enterprise/Custom Attribution | $50K–$150K/year platform + services, or in-house build with data engineer salary ($120K–$180K/year) | 3–6 months data cleanup before models work correctly, ongoing data quality monitoring (0.5 FTE, $60K/year), model retraining quarterly (40 hours each), executive education on model limitations (often skipped, causes bad decisions), opportunity cost of 6–12 month implementation before ROI | Justified when: ad spend >$2M/year, need to shift $500K+ annually to ROI-positive channels, offline conversions >30% of total, multi-market operations, need custom data models not available in SaaS tools |
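To see how hidden costs stack up against the subscription, a rough first-year TCO calculator helps. A minimal sketch; `annual_tco` is a hypothetical name, and the $75/hour blended labor rate, the $120K analyst salary, and the specific mid-market inputs are assumptions picked from within the ranges in the table, not vendor quotes.

```python
def annual_tco(monthly_subscription, setup_hours, weekly_upkeep_hours, hourly_rate,
               monthly_extras=0.0, analyst_fte=0.0, analyst_salary=0.0):
    """Return (software_cost, hidden_cost) for the first year."""
    software = monthly_subscription * 12
    hidden = (
        setup_hours * hourly_rate                  # one-time implementation
        + weekly_upkeep_hours * 52 * hourly_rate   # ongoing QA and model tuning
        + monthly_extras * 12                      # e.g. data warehouse
        + analyst_fte * analyst_salary             # analyst time to interpret outputs
    )
    return software, hidden


# Mid-market example: $2K/month platform, ~1 week (40 h) of setup, 8 h/week QA,
# $500/month warehouse, 0.25 FTE analyst at an assumed $120K salary, $75/h labor (assumed).
software, hidden = annual_tco(2_000, 40, 8, 75, monthly_extras=500,
                              analyst_fte=0.25, analyst_salary=120_000)
print(software, hidden, round(hidden / software, 1))
# 24000 70200.0 2.9
```

Under these assumptions the hidden costs land at roughly 2.9× the subscription, inside the 2–4× range cited above.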

GA4 vs. Paid Platforms: When to Upgrade

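Google Analytics 4 provides free attribution but has significant limitations. The break-even thresholds in the table above double as upgrade triggers: once ad spend passes $20K/month, you run 5 or more paid channels, or you need offline conversions in the model, a paid platform is worth evaluating.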

Cost-benefit example: A brand spending $100K/month on ads ($1.2M/year) considers upgrading from GA4 to Northbeam ($24K/year). Suppose Northbeam's server-side tracking and creative-level attribution reveal that 15% of current spend ($180K) goes to underperforming audiences and creatives. If reallocating that budget to better-performing segments doubles its ROAS (for example, from 1× to 2×), it generates $180K in additional revenue. That is a 7.5× return on the $24K investment, or 650% ROI net of the platform cost. Upgrade justified.
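The same arithmetic as a reusable function, so you can plug in your own spend and assumptions. A minimal sketch; `upgrade_return` is a hypothetical name, and the example interprets the doubled ROAS as a move from 1× to 2×, which is what reproduces the $180K figure.

```python
def upgrade_return(platform_cost, annual_ad_spend, misallocated_share, roas_before, roas_after):
    """Return (added_revenue, gross_return_multiple, net_roi) for a platform upgrade."""
    reallocated = annual_ad_spend * misallocated_share
    added_revenue = reallocated * (roas_after - roas_before)
    gross_multiple = added_revenue / platform_cost
    net_roi = (added_revenue - platform_cost) / platform_cost
    return added_revenue, gross_multiple, net_roi


revenue, multiple, roi = upgrade_return(24_000, 1_200_000, 0.15, roas_before=1.0, roas_after=2.0)
print(round(revenue), round(multiple, 1), f"{roi:.0%}")
# 180000 7.5 650%
```

Reporting both the gross multiple and the net ROI avoids the common mix-up where revenue divided by cost is labeled "ROI".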

Attribution Model Performance Benchmarks by Industry

Attribution models perform differently across industries due to varying customer journey complexity, touchpoint counts, and sales cycle lengths. Use these benchmarks to validate whether your attribution model is working correctly.

| Industry | Avg Touchpoints to Conversion | Best-Fit Attribution Model | Typical Credit Distribution (Brand vs. Non-Brand) | Model Accuracy Indicator |
|---|---|---|---|---|
| B2B SaaS | 8.3 touchpoints, 45–90 day sales cycle | Position-based (40/20/40) or time-decay for long cycles | 40% brand, 60% non-brand (if inverted, attribution window too short) | 55–65% of conversions should match top-5 journey paths; if lower, long-tail fragmentation needs custom model or journey paths are too complex for rule-based attribution |
| Ecommerce (DTC) | 5.2 touchpoints, 7–21 day consideration | Time-decay or data-driven (if >1,000 conversions/month) | 30% brand, 70% non-brand (heavy prospecting emphasis) | 60–70% of conversions match top-5 paths; retargeting should receive 25–40% credit in time-decay models |
| Lead Gen (B2B Services) | 6.7 touchpoints, 30–60 day cycle | Position-based or time-decay | 35% brand, 65% non-brand | 50–60% match top-5 paths; content marketing should receive 20–35% total credit in position-based models (if <10%, attribution window too short) |
| Retail (Omni-Channel) | 4.8 touchpoints (online) + 1.3 store visits | Position-based with store visit weighting | 45% brand, 55% non-brand (high brand awareness baseline) | Store visits should receive 30–50% credit for in-store conversions in position-based; if <20%, store visit tracking gaps present |
| Finance/Insurance | 9.1 touchpoints, 60–120 day cycle | Time-decay (long consideration) or custom rules | 50% brand, 50% non-brand (high-trust purchase, heavy brand research) | 45–55% match top-5 paths; content (comparison guides, calculators) should receive 25–40% total credit—if <15%, attribution model undervaluing research phase |
| Mobile Apps | 2.8 touchpoints to install, 4.2 to in-app purchase | Last-touch for installs, position-based for in-app revenue | 20% brand, 80% non-brand (heavy UA focus) | 70–80% of installs match top-3 paths; if measuring in-app purchase attribution, 50–60% match top-5 paths (longer consideration post-install) |

How to use these benchmarks: Compare your attribution outputs to industry benchmarks. If your B2B SaaS shows 70% brand credit and 30% non-brand credit (inverse of benchmark), your attribution window is likely too short—it's only capturing bottom-funnel branded searches and missing upper-funnel content and paid discovery. If your ecommerce brand shows only 40% of conversions matching top-5 journey paths (vs. 60–70% benchmark), either your customer journeys are unusually fragmented (possible) or your tracking has gaps causing data loss (more likely).
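Both benchmark checks are easy to compute from exported path and credit data. A minimal sketch, assuming each conversion path is a sequence of channel names and that you decide which channels count as "brand" (branded search and direct below are an assumption); the function names are hypothetical.

```python
from collections import Counter


def top_k_path_share(paths, k=5):
    """Share of conversions whose exact channel sequence is among the k most common."""
    counts = Counter(tuple(p) for p in paths)
    return sum(c for _, c in counts.most_common(k)) / len(paths)


def brand_share(credit, brand_channels):
    """Fraction of total attributed credit that goes to brand channels."""
    total = sum(credit.values())
    return sum(v for ch, v in credit.items() if ch in brand_channels) / total


paths = (
    [("paid_social", "email", "branded_search")] * 4
    + [("content", "branded_search")] * 2
    + [("display",), ("webinar", "demo"), ("content", "email"), ("partner",)]
)
credit = {"branded_search": 0.7, "direct": 0.0, "content": 0.2, "paid_social": 0.1}

print(round(top_k_path_share(paths), 2))
# 0.9
print(round(brand_share(credit, {"branded_search", "direct"}), 2))
# 0.7
```

For a B2B SaaS account, a brand share of 0.7 is the inverted pattern described above and points to an attribution window that is too short.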

Attribution Edge Cases and Workarounds

Attribution models handle typical customer journeys well but fail predictably on edge cases. These scenarios require specialized measurement approaches that most attribution platforms don't support natively.

Edge Case #1: Dark Social (WhatsApp, Slack, Private Shares)

• Problem: Customers discover your content via private messaging apps (WhatsApp, Slack, iMessage) or private social groups. These appear as direct traffic in analytics because referrer data is stripped. Attribution models systematically under-credit social channels because high-performing content shared privately shows no social referrer.

• Detection method: Sudden spikes in direct traffic correlated with content publish dates suggest dark social sharing. If direct traffic increases 40%+ within 48 hours of publishing a blog post or launching a campaign, dark social is likely driving that traffic.

• Workaround:

• Add campaign parameters to all shareable content: ?utm_source=social&utm_medium=dark-social&utm_campaign=content-title

• Use URL shorteners with click tracking (Bitly, TinyURL) for social shares: even when links are copied into private messages, the shortener still logs each click, and UTM parameters on the destination URL survive the redirect

• Deploy "share with attribution" buttons that auto-append UTM parameters when users copy links

• Survey new customers: "How did you first hear about us?" with "Friend/colleague recommendation" as option—provides proxy metric for dark social influence
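The UTM workaround and the detection heuristic are both small enough to sketch. This assumes shareable URLs are tagged at build time (or by a share button) and that "40%+" is measured against direct sessions in the 48 hours before publishing; the function names are hypothetical, and existing UTM values are deliberately left untouched.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_for_dark_social(url, campaign):
    """Append the dark-social UTM parameters without overwriting any already present."""
    parts = urlsplit(url)
    # dict() keeps the last value of a repeated key, which is fine for typical share URLs.
    query = dict(parse_qsl(parts.query, keep_blank_values=True))
    query.setdefault("utm_source", "social")
    query.setdefault("utm_medium", "dark-social")
    query.setdefault("utm_campaign", campaign)
    return urlunsplit(parts._replace(query=urlencode(query)))


def dark_social_spike(direct_sessions_before_48h, direct_sessions_after_48h, threshold=0.40):
    """True when direct traffic after publishing rises by at least `threshold` over the prior 48 hours."""
    if direct_sessions_before_48h == 0:
        return direct_sessions_after_48h > 0
    lift = (direct_sessions_after_48h - direct_sessions_before_48h) / direct_sessions_before_48h
    return lift >= threshold


print(tag_for_dark_social("https://example.com/blog/attribution-guide?ref=nav", "attribution-guide"))
# https://example.com/blog/attribution-guide?ref=nav&utm_source=social&utm_medium=dark-social&utm_campaign=attribution-guide
print(dark_social_spike(1_000, 1_450))
# True
```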

Edge Case #2: View-Through Attribution in Cross-Device World

• Problem: View-through attribution credits conversions to display/video ad impressions even when the user didn't click. In a single-device world, this was trackable via cookies. In the cross-device reality of 2026, with iOS ATT and cookie deprecation, view-through is unreliable: did the user who saw the ad on mobile and converted on desktop really see the ad, or is the device graph probabilistically linking the wrong users?

• When to trust view-through:

• Household-level device fingerprinting (e.g., connected TV linked to mobile in the same household) with 80%+ match confidence

• Deterministic cross-device (user logged into app on both devices)

• Short attribution window (1–7 days) where recency makes false positives less likely

• When to ignore view-through:

• Probabilistic cross-device matching with <60% confidence scores

• Long attribution windows (30+ days) where temporal proximity is weak signal

• High-frequency display campaigns where most users saw 10+ impressions (view-through over-credits by assuming causation from correlation)

Workaround: Run incrementality tests. Pause the display campaign in matched geo markets for 14 days and compare conversions in active markets vs. paused markets. If conversions drop <10% when display is paused, view-through attribution is massively overstating impact. Reweight display credit by an incrementality factor (e.g., if only 10% of view-through conversions prove incremental, multiply view-through credit by 0.10).
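The holdout arithmetic can be sketched in a few lines. This is a minimal sketch under our own assumptions: you have total conversions for the active and paused market groups in a pre-period and during the 14-day test, and the paused markets' expected conversions are projected from the active markets' trend (a simple difference-in-differences-style adjustment we chose, not a requirement of the test). `view_through_attributed` is a hypothetical input: the conversions the model would have credited to display view-through in the paused markets over the test period.

```python
def geo_holdout_incrementality(active_pre, active_test, paused_pre, paused_test,
                               view_through_attributed):
    """Estimate the conversion drop and a view-through incrementality factor
    (hypothetical helper)."""
    # Expected paused-market conversions had display kept running,
    # assuming they would have followed the active markets' trend.
    expected_paused = paused_pre * (active_test / active_pre)
    lost = max(expected_paused - paused_test, 0.0)  # conversions lost by pausing
    drop_pct = lost / expected_paused
    # Share of view-through-credited conversions that were actually incremental.
    factor = min(lost / view_through_attributed, 1.0)
    return drop_pct, factor


drop, factor = geo_holdout_incrementality(
    active_pre=1000, active_test=1100, paused_pre=1000, paused_test=1050,
    view_through_attributed=500)
print(round(drop, 3), round(factor, 2))  # 0.045 0.1
```

In this made-up example conversions fell about 4.5% against expectation, below the 10% bar, and only about 10% of view-through credit proved incremental, so view-through credit would be multiplied by 0.10.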

Edge Case #3: Offline + Online Attribution

• Problem: Customer sees digital ads, visits physical store, converts. Attribution model has no visibility into store visit because tracking breaks at offline boundary. Digital channels appear to drive zero conversions, leading to budget cuts for channels that actually drive offline revenue.

• Workaround using geo-experiments:

• Select matched market pairs (similar demographics, store density, historical sales patterns)

• Increase digital ad spend 40–60% in treatment markets, hold flat in control markets

• Run for 4–8 weeks to capture full sales cycle

• Measure store visit lift and revenue lift in treatment vs. control using store POS data

• Calculate digital channel contribution: (treatment market revenue - control market revenue) / (treatment market ad spend increase) = incremental ROAS

• Apply this ROAS to digital channel credit in the attribution model as an "offline halo multiplier"
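The incremental ROAS formula above, pooled across several market pairs, might look like this. It is a sketch that assumes each treatment market is matched to a control market of similar size, so raw revenue differences are comparable; if your pairs differ in size, compare each market's lift against its own pre-period baseline instead. The function name and figures are hypothetical.

```python
def incremental_roas(market_pairs):
    """market_pairs: list of (treatment_revenue, control_revenue, treatment_spend_increase).

    Returns (sum of treatment - control revenue) / (sum of spend increases).
    """
    revenue_diff = sum(t - c for t, c, _ in market_pairs)
    spend_increase = sum(s for _, _, s in market_pairs)
    if spend_increase <= 0:
        raise ValueError("treatment markets need a positive spend increase")
    return revenue_diff / spend_increase


pairs = [(520_000, 480_000, 20_000), (310_000, 295_000, 10_000)]
print(round(incremental_roas(pairs), 2))  # 1.83
```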

Alternative workaround using store visit tracking: Platforms like Google Store Visits (requires Google Ads + location data opt-in) or Foursquare/Placed (mobile location SDKs) track users who saw ads and later visited stores. Accuracy depends on location permission adoption—if <30% of users enable location tracking, data is too sparse to be reliable.

Edge Case #4: Word-of-Mouth Attribution

• Problem: Customer hears about the product from a friend, colleague, or influencer mention (not paid sponsorship). This appears as direct traffic or branded search in attribution, with no credit to the channel that generated the word-of-mouth in the first place (often content marketing, product quality, or customer experience).

• Workaround using promo codes: Assign unique promo codes to customer advocates, referral programs, or influencer partnerships. When new customer enters promo code, attribute conversion to referral source. Limitation: only captures conversions where promo code is used—many word-of-mouth conversions occur without code usage.

• Workaround using new customer surveys: Email survey to all new customers within 48 hours of first purchase: "How did you first hear about [brand]?" Options include: Search engine, Social media, Friend/colleague recommendation, Online review, Other. If >20% select "Friend/colleague," word-of-mouth is material channel that attribution is missing. Use survey data to create proxy metric for WOM influence even though you can't attribute individual conversions.
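Tallying the survey against the 20% threshold is simple. This sketch assumes responses arrive as a list of answer strings matching the option labels above; `word_of_mouth_share` is a hypothetical helper name.

```python
from collections import Counter


def word_of_mouth_share(responses, wom_option="Friend/colleague recommendation",
                        threshold=0.20):
    """Share of new-customer answers citing word-of-mouth, and whether it is material."""
    counts = Counter(r.strip() for r in responses if r and r.strip())  # skip blanks
    total = sum(counts.values())
    share = counts[wom_option] / total if total else 0.0
    return share, share > threshold


answers = (["Search engine"] * 50 + ["Friend/colleague recommendation"] * 30
           + ["Social media"] * 20)
share, material = word_of_mouth_share(answers)
print(share, material)  # 0.3 True
```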

Edge Case #5: Brand Halo Effect vs. Direct Response

• Problem: Broad brand-building campaigns (TV, podcast sponsorships, content marketing) don't generate immediate measurable conversions but increase likelihood that all future marketing (search, social, email) performs better. Attribution models under-credit brand-building because it has no last-touch conversions, even though brand campaigns improve every other channel's performance.

• Detection method: Run correlation analysis between brand campaign spend and organic/direct traffic volume. If organic search traffic increases 15–30% during periods of high brand spend and decreases when brand spend pauses, brand campaigns are driving search behavior that gets mis-attributed to organic channel.

• Workaround using media mix modeling: MMM measures aggregate impact of all marketing channels on revenue using regression analysis. Unlike user-level attribution, MMM captures brand halo by correlating brand spend with overall revenue lift across all channels. Run MMM quarterly to quantify brand contribution that user-level attribution misses. Blend MMM outputs (top-down) with attribution data (bottom-up) for complete view.

Edge Case #6: Attribution in Privacy-First World (iOS ATT, Cookie Deprecation)

• Problem: iOS App Tracking Transparency and cookie deprecation obscure 42–65% of customer journeys. Attribution models that depend on deterministic user tracking produce systematically incomplete data.

• Workaround using aggregated measurement strategies:

• Media mix modeling (MMM): Top-down regression analysis correlating marketing spend with revenue. Doesn't require user-level tracking. Works when 40%+ of journeys are untrackable.

• Geo-experiments: Increase spend in test markets, measure revenue lift vs. control markets. Bypasses user tracking entirely—relies on aggregate market-level data.

• Conversion modeling: Platforms like SegmentStream use machine learning to estimate unobserved conversions based on partial data. Recovers 30–40% of lost touchpoints but introduces estimation error.

• Server-side tracking: Tools like Northbeam use first-party server-side tracking that's more resilient to iOS ATT and cookie restrictions than client-side pixels. Requires engineering resources to implement.

Decision framework: If >40% of your traffic comes from iOS devices OR >50% of traffic shows as "direct" (indicating referrer stripping), user-level attribution will have massive blind spots. Supplement with at least one aggregated measurement method (MMM or geo-experiments) to validate attribution outputs and catch systematic biases.
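The decision framework reduces to two ratios. A minimal sketch, assuming you can export session counts by operating system and by channel from your analytics tool; the label strings `"iOS"` and `"direct"` and the helper name are assumptions to adapt to your own data.

```python
def privacy_blind_spot_check(sessions_by_os, sessions_by_channel):
    """Apply the >40% iOS / >50% direct rule of thumb (hypothetical helper).

    Both arguments map a label to session counts for the same period.
    """
    ios_share = sessions_by_os.get("iOS", 0) / sum(sessions_by_os.values())
    direct_share = sessions_by_channel.get("direct", 0) / sum(sessions_by_channel.values())
    return {
        "ios_share": ios_share,
        "direct_share": direct_share,
        # True -> add MMM or geo-experiments to validate user-level attribution
        "supplement_needed": ios_share > 0.40 or direct_share > 0.50,
    }


result = privacy_blind_spot_check(
    {"iOS": 45_000, "Android": 35_000, "Windows": 20_000},
    {"direct": 30_000, "organic": 40_000, "paid": 30_000},
)
print(result["supplement_needed"])  # True (45% of sessions are iOS)
```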

Key Metrics and KPIs for Cross-Channel Attribution

Attribution success requires tracking metrics that validate model accuracy and reveal optimization opportunities. These metrics fall into three tiers: foundational (track always), journey (track for multi-touch strategies), and advanced (track when optimizing for LTV and incrementality).

Foundational Attribution Metrics

• Attributed conversions by channel: Total conversions credited to each channel under your attribution model. Compare to last-click conversions—if multi-touch shows dramatically different channel rankings, your last-click model was systematically misallocating budget.

• Attributed revenue by channel: Revenue credited to each channel, weighted by attribution model. More accurate than attributed conversions for channels with varying AOV (e.g., email may have lower conversion count but higher AOV than paid social).

• Cost per attributed conversion: Channel spend ÷ attributed conversions. Compare to cost per last-click conversion to quantify how multi-touch attribution changes efficiency calculations.

• Return on ad spend (ROAS) by attribution model: Attributed revenue ÷ ad spend. Track ROAS under multiple models simultaneously (last-click, linear, time-decay, position-based) to understand sensitivity—if all models agree on top-3 channels, model choice matters less; if models disagree significantly, model selection is critical.

• Model stability: Track whether top-3 channels by attributed revenue remain consistent week-over-week. If rankings change frequently (>30% week-over-week variation), insufficient data volume or tracking gaps are causing noise.
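Tracking ROAS under several models at once is easier when every model runs over the same journey data. The sketch below implements the four rule-based models named above on toy journeys. Assumptions to flag: each journey is an ordered list of `(channel, days_before_conversion)` touches; time-decay uses a 7-day half-life (a common default, not a universal one); and position-based uses 40/20/40, falling back to 100% for one touch and 50/50 for two touches, which is one convention among several.

```python
from collections import defaultdict


def touch_weights(journey, model, half_life_days=7.0):
    """Credit weights (summing to 1) for each touch in an ordered journey."""
    n = len(journey)
    if model == "last_click":
        return [0.0] * (n - 1) + [1.0]
    if model == "linear":
        return [1.0 / n] * n
    if model == "time_decay":
        # Credit halves for every `half_life_days` between touch and conversion.
        raw = [0.5 ** (days / half_life_days) for _, days in journey]
        total = sum(raw)
        return [w / total for w in raw]
    if model == "position_based":
        if n == 1:
            return [1.0]
        if n == 2:
            return [0.5, 0.5]
        return [0.4] + [0.2 / (n - 2)] * (n - 2) + [0.4]
    raise ValueError(f"unknown model: {model}")


def attributed_revenue(journeys, model):
    """journeys: list of (ordered touches, revenue). Returns {channel: revenue credit}."""
    totals = defaultdict(float)
    for journey, revenue in journeys:
        for (channel, _), w in zip(journey, touch_weights(journey, model)):
            totals[channel] += w * revenue
    return dict(totals)


journeys = [
    ([("content", 30), ("social", 14), ("email", 3), ("search", 0)], 100.0),
    ([("search", 0)], 50.0),
]
for model in ("last_click", "linear", "time_decay", "position_based"):
    credit = attributed_revenue(journeys, model)
    print(model, {ch: round(v, 1) for ch, v in sorted(credit.items())})
```

Dividing each channel's attributed revenue by its spend gives ROAS per model; if the top channels agree across all four models, model choice matters less, and if they diverge, model selection is critical.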

Journey-Level Metrics

• Average touchpoints to conversion: Mean number of marketing interactions before conversion. If this increases over time (e.g., 5.2 touchpoints in Q1, 6.8 in Q4), customer journeys are getting more complex—last-click and first-touch models will become increasingly inaccurate.

• Conversion path length distribution: Histogram showing what percentage of conversions involve 1, 2, 3, 4, 5+ touchpoints. If >70% of conversions are 1–2 touchpoints, multi-touch attribution is overkill. If >60% are 5+ touchpoints, single-touch models are systematically wrong.

• Assisted conversions: Conversions where a channel appeared in the journey but wasn't the last touch. If a channel has 500 last-click conversions but 2,000 assisted conversions, it assists four times as often as it closes, so last-click attribution captures only a small part of its contribution: a strong signal that you need a multi-touch model.

• Assisted conversion value: Revenue from conversions where channel assisted but didn't close. High assisted value + low last-click value = upper-funnel awareness channel being systematically undervalued.

• Time to conversion by first touch channel: Days between first marketing touch and conversion, segmented by initial channel. If content marketing first-touch has 45-day average vs. paid search first-touch 7-day average, these channels serve different funnel stages—attribution model must account for temporal differences.
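The path-length histogram and the assisted vs. last-click comparison can both be computed from raw conversion paths. This sketch assumes each path is an ordered list of channel names ending at the converting touch; counting a channel at most once per conversion, and not counting it as an assist when it is also the final touch, are our definitional choices (analytics tools differ here).

```python
from collections import Counter


def path_length_distribution(paths, cap=5):
    """Share of conversions by number of touchpoints; lengths >= cap are grouped as "5+"."""
    counts = Counter(min(len(p), cap) for p in paths)
    return {(f"{k}+" if k == cap else str(k)): counts[k] / len(paths) for k in sorted(counts)}


def assisted_vs_last_click(paths):
    """Per channel: (last-click conversions, assisted conversions).

    A channel assists a conversion if it appears before the final touch and is
    not itself the final touch; each channel counts at most once per conversion.
    """
    last = Counter(p[-1] for p in paths)
    assisted = Counter()
    for p in paths:
        assisted.update(set(p[:-1]) - {p[-1]})
    return {ch: (last[ch], assisted[ch]) for ch in sorted(set(last) | set(assisted))}


paths = [
    ["social", "content", "search"],
    ["content", "search"],
    ["search"],
    ["email", "social", "email"],
]
print(path_length_distribution(paths))  # {'1': 0.25, '2': 0.25, '3': 0.5}
print(assisted_vs_last_click(paths))
# {'content': (0, 2), 'email': (1, 0), 'search': (3, 0), 'social': (0, 2)}
```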

Advanced Metrics (Incrementality and LTV)

• Incremental conversions: Conversions that wouldn't have happened without the channel (measured via holdout tests). Formula: (test-group conversion rate - control-group conversion rate) × test-group size. Use it to correct attribution over-crediting: if branded search shows 500 attributed conversions but an incrementality test reveals only 100 incremental, its true incremental value is 100 conversions.

• Customer lifetime value (LTV) by first/last touch channel: Track LTV of customers acquired through different channels. If social media first-touch customers have 60-day LTV of $800 vs. paid search first-touch $450, social delivers higher-quality customers even if initial conversion cost is higher—attribution model should weight accordingly.

• Cross-channel conversion rate: Percentage of users who interact with multiple channels and convert, vs. single-channel users. If multi-channel users convert at 8% vs. 2% for single-channel, this quantifies value of cross-channel exposure that attribution must capture.

• Attribution model ROI impact: Revenue change attributed to switching attribution models. Example: switching from last-click to position-based reallocates $50K/month from branded search to content marketing. If overall ROAS increases from 4.2× to 5.1× over the next 90 days, and you apply that gain to the reallocated spend only (assuming nothing else drove it), the model switch generated (5.1 - 4.2) × $50K × 3 months = $135K additional revenue. This metric justifies attribution platform investment.
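Both calculations above are short enough to script. In this sketch, incremental conversions are computed as the difference in conversion rates between test and control groups scaled to the test-group size, which stays correct when the groups are unequal; the model-switch estimate applies the ROAS gain to the reallocated spend only, as in the example, and assumes nothing else changed in the period. Function names and inputs are hypothetical.

```python
def incremental_conversions(test_conversions, test_size, control_conversions, control_size):
    """Conversions in the test group beyond what the control group's rate predicts."""
    lift_rate = test_conversions / test_size - control_conversions / control_size
    return lift_rate * test_size


def model_switch_revenue(roas_before, roas_after, reallocated_per_month, months):
    """Extra revenue credited to a model switch, applying the ROAS gain to the
    reallocated spend only."""
    return (roas_after - roas_before) * reallocated_per_month * months


print(round(incremental_conversions(600, 10_000, 500, 10_000)))  # 100
print(round(model_switch_revenue(4.2, 5.1, 50_000, 3)))  # 135000
```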

Implementation Guide: Building Your Attribution System

Attribution implementation follows a three-phase process: foundation (data infrastructure), execution (model deployment), and optimization (continuous refinement). Most failures occur in the foundation phase, when teams skip data quality validation before deploying models.

Phase 1: Foundation (Weeks 1–4)

Step 1: Data source audit

• Map all marketing data sources (ad platforms, CRM, email, analytics, call tracking, offline sales)

• Document current tracking implementation: pixel types, UTM parameter conventions, conversion definitions

• Identify tracking gaps: which channels lack conversion tracking, where are cross-device blind spots, what percentage of journeys are unobservable

Step 2: Data quality baseline

• Pull 90 days of historical conversion data from all sources

• Calculate data quality metrics: what percentage of conversions have complete UTM parameters, how often do platform conversion counts match (Google Ads vs. GA4 vs. CRM), what percentage of users are trackable cross-device

• Establish quality thresholds: if <70% of conversions have complete source/medium/campaign data, fix tracking before deploying attribution model; if platform counts differ by >15%, reconcile discrepancies before trusting any attribution
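The two Step 2 thresholds can be checked with a small gate before any model is deployed. A sketch, assuming conversions are exported as dictionaries with `utm_source`, `utm_medium`, and `utm_campaign` keys and that platform discrepancies are measured against the CRM count; the reference choice and helper name are our assumptions.

```python
def data_quality_gate(conversions, platform_counts, reference="CRM",
                      min_utm_completeness=0.70, max_discrepancy=0.15):
    """Check the Step 2 thresholds before trusting any attribution (hypothetical helper).

    conversions: list of dicts with utm_source / utm_medium / utm_campaign keys.
    platform_counts: {platform: conversions reported for the same period}.
    """
    required = ("utm_source", "utm_medium", "utm_campaign")
    complete = sum(1 for c in conversions if all(c.get(k) for k in required))
    completeness = complete / len(conversions)
    ref = platform_counts[reference]
    # Largest relative gap between any platform and the reference source.
    discrepancy = max(abs(n - ref) / ref for n in platform_counts.values())
    ready = completeness >= min_utm_completeness and discrepancy <= max_discrepancy
    return {"utm_completeness": completeness, "max_discrepancy": discrepancy,
            "ready_for_attribution": ready}


sample = ([{"utm_source": "google", "utm_medium": "cpc", "utm_campaign": "brand"}] * 8
          + [{"utm_source": "newsletter", "utm_medium": "email"}] * 2)
report = data_quality_gate(sample, {"Google Ads": 1180, "GA4": 1050, "CRM": 1000})
print(report["utm_completeness"], round(report["max_discrepancy"], 2),
      report["ready_for_attribution"])  # 0.8 0.18 False
```

Here UTM completeness passes (80%), but Google Ads reports 18% more conversions than the CRM, so discrepancies need reconciling first.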

Step 3: Choose attribution model based on diagnostic framework

• Use decision tree from earlier section: assess conversion volume, path length, sales cycle, data science resources

• Select 1 primary model for budget decisions + 1 comparison model for validation

• Document model parameters: attribution window (7, 14, 30, 90 days), conversion types included (all conversions, transaction revenue only, qualified leads only), channel grouping rules

Step 4: Platform selection and integration

• Based on use case (B2B vs. ecommerce vs. omni-channel), budget, and data volume, select platform from comparison table earlier

• If using SaaS tool: provision accounts, integrate data sources, configure conversion mapping

• If building in-house: set up data warehouse (BigQuery, Snowflake, Redshift), build ETL pipelines, implement attribution logic in SQL

• Run parallel tracking: keep existing reporting while attribution platform runs in shadow mode for 30 days to validate data accuracy

Phase 2: Execution (Weeks 5–12)

Step 5: Model validation

• Compare new attribution model outputs to last-click baseline: which channels gained/lost credit, by how much

• Run sanity checks: do top-3 channels remain stable week-over-week, do attributed conversion counts sum to actual total conversions (they should), does revenue attribution match actual revenue (within 5% tolerance)

• Validate against known patterns: if content marketing has 3,000 assisted conversions but receives near-zero credit, model is broken; if branded search receives 80% credit, model is over-crediting bottom-funnel
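The Step 5 sanity checks can run as one function over recent model output. A minimal sketch with hypothetical inputs: attributed conversions and revenue per channel, the actual totals, and the top-3 channels for each recent week. Interpreting "stable" as at most 30% of week-over-week transitions changing the top-3 set is our reading of the model-stability metric, and the 80% ceiling comes from the branded-search example.

```python
def validate_attribution(attributed_conversions, actual_conversions,
                         attributed_revenue, actual_revenue, weekly_top3,
                         revenue_tolerance=0.05, max_channel_share=0.80,
                         max_top3_change_rate=0.30):
    """Step 5 sanity checks (hypothetical helper); returns {check_name: passed}."""
    total_rev = sum(attributed_revenue.values())
    # Fractional credits should add back up to the real conversion total.
    conv_gap = abs(sum(attributed_conversions.values()) - actual_conversions)
    rev_gap = abs(total_rev - actual_revenue) / actual_revenue
    # Largest single-channel share (e.g. branded search) of attributed revenue.
    top_share = max(attributed_revenue.values()) / total_rev
    # Week-over-week transitions where the set of top-3 channels changed.
    changes = sum(set(a) != set(b) for a, b in zip(weekly_top3, weekly_top3[1:]))
    transitions = max(len(weekly_top3) - 1, 1)
    return {
        "conversions_sum": conv_gap <= 1e-6 * actual_conversions,
        "revenue_within_tolerance": rev_gap <= revenue_tolerance,
        "no_dominant_channel": top_share < max_channel_share,
        "top3_stable": changes / transitions <= max_top3_change_rate,
    }


checks = validate_attribution(
    attributed_conversions={"search": 400.0, "content": 350.0, "social": 250.0},
    actual_conversions=1000,
    attributed_revenue={"search": 40_000, "content": 30_000, "social": 28_000},
    actual_revenue=100_000,
    weekly_top3=[["search", "content", "social"], ["content", "search", "social"],
                 ["search", "email", "social"], ["search", "content", "social"]],
)
print(checks)
# {'conversions_sum': True, 'revenue_within_tolerance': True, 'no_dominant_channel': True, 'top3_stable': False}
```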

Step 6: Stakeholder education

• Present attribution model logic to marketing leadership: explain credit assignment rules, show example customer journeys with credit distribution, clarify what model captures and what it misses (e.g., doesn't measure incrementality, doesn't capture dark social)

• Create attribution FAQ document: answer "Why did channel X lose credit?," "How do we know the model is accurate?," "When should we reweight the model?"

• Set expectations: attribution model is directional, not precise; use for relative channel ranking and trend analysis, not absolute truth

Step 7: Gradual budget reallocation

• Don't immediately reallocate based on new model—make 10–15% budget shifts per month max

• Start with channels showing the largest attribution discrepancies: if display advertising had 500 last-click conversions but 2,500 attributed conversions (5× multiplier), test a 20% budget increase and measure the ROAS impact

• Track performance for 60–90 days before next reallocation—gives model time to stabilize and validates that attribution-driven decisions improve actual outcomes
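One way to enforce the monthly cap is to move each channel only part of the way toward its attribution-recommended budget. This sketch assumes current and target budgets share the same total and treats "budget shift" as the dollars that change hands as a share of total budget; 15% is the upper end of the guideline above, and the helper is hypothetical.

```python
def capped_reallocation(current, target, max_shift=0.15):
    """Move from `current` toward `target` budgets, shifting at most `max_shift`
    of total budget this month (hypothetical helper; totals assumed equal)."""
    total = sum(current.values())
    channels = set(current) | set(target)
    deltas = {ch: target.get(ch, 0.0) - current.get(ch, 0.0) for ch in channels}
    moved = sum(d for d in deltas.values() if d > 0)  # dollars that would change hands
    scale = min(1.0, max_shift * total / moved) if moved else 0.0
    # Every channel moves the same fraction of the way toward its target.
    return {ch: current.get(ch, 0.0) + d * scale for ch, d in sorted(deltas.items())}


current = {"search": 60_000, "display": 20_000, "content": 20_000}
target = {"search": 30_000, "display": 40_000, "content": 30_000}
print(capped_reallocation(current, target))
# {'content': 25000.0, 'display': 30000.0, 'search': 45000.0}
```

Because every channel moves the same fraction of the way, the total stays constant; rerun with the new budgets as `current` at the next reallocation.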

Phase 3: Optimization (Ongoing)

Step 8: Quarterly model review

• Re-run model selection diagnostic: has conversion volume increased enough to support algorithmic model, has average path length changed, have new channels launched

• Compare model outputs quarter-over-quarter: if top-3 channels changed significantly, investigate why—is customer behavior shifting, or is model picking up noise

• Adjust attribution window if needed: if sales cycle lengthens from 30 to 45 days, extend attribution window from 30 to 60 days

Step 9: Incrementality testing

• Run annual incrementality tests on channels receiving >15% of budget: turn off channel in matched geo markets for 14–30 days, measure conversion lift

• Calculate incrementality multiplier: (lift percentage) ÷ (attributed percentage). Example: channel shows 25% attribution credit but incrementality test shows 10% lift → multiplier is 0.40 → channel is 40% as valuable as attribution suggests

• Adjust budget allocation: apply incrementality multipliers to attribution-based budget recommendations for more accurate optimization
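The Step 9 multiplier and its application to budget shares can be sketched as follows. Channels that have not been tested keep a multiplier of 1.0, and the adjusted shares are renormalized to sum to 1; both are our assumptions, as are the channel names and figures.

```python
def incrementality_multiplier(lift_pct, attributed_pct):
    """Step 9 ratio: measured lift / attributed credit (e.g. 0.10 / 0.25 = 0.40)."""
    return lift_pct / attributed_pct


def apply_multipliers(attributed_share, multipliers):
    """Scale attribution-based budget shares by tested multipliers and renormalize.

    Channels without a test keep a multiplier of 1.0 (our assumption).
    """
    adjusted = {ch: s * multipliers.get(ch, 1.0) for ch, s in attributed_share.items()}
    total = sum(adjusted.values())
    return {ch: v / total for ch, v in adjusted.items()}


m = incrementality_multiplier(0.10, 0.25)
shares = apply_multipliers({"paid_social": 0.25, "search": 0.45, "email": 0.30},
                           {"paid_social": m})
print(round(m, 2), {ch: round(s, 3) for ch, s in shares.items()})
# 0.4 {'paid_social': 0.118, 'search': 0.529, 'email': 0.353}
```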

Step 10: Model retraining (for ML models)

• Retrain data-driven models quarterly (B2C) or semi-annually (B2B)

• Retrain immediately if: new channel launches, sales cycle shifts >20%, major seasonality event, conversion rate changes >30%

• Validate retrained model on holdout time period before deploying: train on months 1–9, test on month 10, deploy only if month 10 predictions are accurate
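The retraining triggers and the month-10 holdout can be expressed as two checks. A sketch under stated assumptions: `predict` stands in for whatever your data-driven model exposes (here a naive trailing three-month average, not a real attribution model), the 10% error ceiling is our placeholder for "accurate", and seasonality events are passed in as a flag because they are a judgment call.

```python
def should_retrain(new_channel_launched, major_seasonal_event,
                   old_cycle_days, new_cycle_days, old_cvr, new_cvr):
    """Step 10 triggers: new channel, seasonality event, >20% sales-cycle shift,
    or >30% conversion-rate change."""
    cycle_shift = abs(new_cycle_days - old_cycle_days) / old_cycle_days
    cvr_change = abs(new_cvr - old_cvr) / old_cvr
    return (new_channel_launched or major_seasonal_event
            or cycle_shift > 0.20 or cvr_change > 0.30)


def holdout_check(actual_by_month, predict, train_months=9, max_error=0.10):
    """Fit on months 1-9, score month 10, and report whether the error is acceptable.

    `predict` receives the training months and returns a forecast for the next month.
    """
    train = actual_by_month[:train_months]
    test_value = actual_by_month[train_months]
    error = abs(predict(train) - test_value) / test_value
    return error, error <= max_error


print(should_retrain(False, False, 30, 38, 0.020, 0.022))  # True (sales cycle +27%)
monthly_conversions = [100, 105, 110, 108, 112, 115, 118, 120, 122, 125, 130, 128]
error, deploy = holdout_check(monthly_conversions, lambda train: sum(train[-3:]) / 3)
print(round(error, 3), deploy)  # 0.04 True
```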

Cross-Channel Attribution in 2026: Trends and Predictions

Cross-channel attribution in 2026 emphasizes AI-driven data models, privacy-compliant tracking, and LTV integration. Brands using custom algorithmic attribution and incrementality testing achieve 35–50% better ROAS than those relying on platform-native last-click attribution. Key innovations include machine learning for probabilistic attribution, server-side tracking resilient to iOS ATT, and emerging blockchain solutions for consent management.

Shift to Multi-Touch and Data-Driven Models

Last-click attribution is obsolete in 2026 due to cookie deprecation, walled garden fragmentation, and AI-powered discovery channels where customers research extensively before converting. Google Analytics 4's 2026 beta features improve attribution accuracy by 25–40% through enhanced CRM integration and conversion-level attribution settings.

For B2B SaaS, account-based attribution tracking buying committees (averaging 6.8 decision-makers) across multiple stakeholder journeys is now standard. Companies implementing account-level attribution achieve 81% higher ROI in Account-Based Marketing campaigns compared to individual-level attribution.

Privacy-First Attribution Strategies

First-party and zero-party data strategies dominate 2026 attribution. Consent tracking, email-based identity resolution, and incentivized data sharing ("share your email for 10% discount") replace third-party cookies. Server-side tracking platforms like Northbeam and SegmentStream gain market share by resisting iOS ATT restrictions that cripple pixel-based competitors.

Conversion modeling using machine learning estimates unobserved conversions based on partial journey data, recovering 30–40% of touchpoints lost to privacy restrictions. However, this introduces estimation error that must be quantified—best practice is to report conversion ranges ("estimated 450–520 conversions") rather than false precision.

LTV and Lifecycle Attribution

Attribution extends beyond first purchase to retention, referrals, and cross-sells in 2026. Cohort-based LTV forecasting attributes long-term customer value to acquisition channels, revealing that channels with higher initial CAC (e.g., content marketing, influencer partnerships) often deliver 2–3× higher LTV than low-CAC channels (e.g., branded search).

This shifts budget allocation from "lowest cost per conversion" to "highest LTV per dollar spent"—a fundamental reframe that requires integrating CRM revenue data with marketing attribution platforms.

Real-Time Attribution and Optimization

Daily attribution dashboards enable real-time ROAS reallocation, creative-level analysis, and audience expansion decisions. The shift from monthly reporting cycles to daily optimization loops compresses testing cycles: brands run 3–5× more experiments per quarter than in 2024.

However, real-time data introduces noise. Best practice is to use real-time dashboards for anomaly detection ("CPA spiked 40% today—investigate") while maintaining 7–14 day evaluation windows for optimization decisions to filter out daily volatility.
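The split between anomaly detection and optimization decisions can be sketched with a trailing baseline. This assumes daily spend and conversion series with today's value last; the 7-day baseline and 40% alert threshold echo the example above, and the helper name is hypothetical.

```python
def cpa_anomaly(daily_spend, daily_conversions, baseline_days=7, alert_threshold=0.40):
    """Compare today's CPA (last element) with the trailing `baseline_days` CPA.

    Returns (relative change, alert). An alert means "investigate"; budget
    decisions should still rest on a 7-14 day evaluation window.
    """
    base_spend = sum(daily_spend[-baseline_days - 1:-1])
    base_conv = sum(daily_conversions[-baseline_days - 1:-1])
    if base_conv == 0:
        raise ValueError("no conversions in the baseline window")
    baseline_cpa = base_spend / base_conv
    if daily_conversions[-1] == 0:
        # Spend with no conversions is an infinite CPA; no spend is no signal.
        return (float("inf"), True) if daily_spend[-1] > 0 else (0.0, False)
    change = (daily_spend[-1] / daily_conversions[-1]) / baseline_cpa - 1
    return change, change >= alert_threshold


change, alert = cpa_anomaly([1000] * 8, [20] * 7 + [14])
print(round(change, 3), alert)  # 0.429 True
```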

B2B-Specific Attribution Advances

Dark funnel tracking (peer recommendations, private Slack communities, analyst reports) becomes measurable through proxy metrics and survey attribution. New customer surveys asking "How did you first hear about us?" provide quantitative estimates for word-of-mouth and dark social contributions that traditional attribution misses.

Intent data providers (Bombora, 6sense, Demandbase) integrate with attribution platforms to credit channels that drive account-level intent signals even before individual conversions occur, a form of leading-indicator attribution that predicts pipeline before deals close.

Conclusion

Cross-channel attribution in 2026 requires balancing sophisticated measurement with acknowledgment of fundamental limitations. Privacy regulations, walled gardens, and cross-device complexity obscure 42–65% of customer journeys, making perfect attribution impossible. However, directionally accurate attribution—using the right model for your data volume, sales cycle, and business model—prevents the 26% budget waste caused by last-click over-reliance.

The key lessons:

• Model selection matters more than model sophistication. A simple position-based model with sufficient data outperforms a complex algorithmic model trained on inadequate volume. Use the decision tree framework to match model to data characteristics.

• Attribution measures correlation, not causation. Supplement with incrementality testing annually for channels receiving >15% of budget. A channel receiving 50% attribution credit but only 20% incremental lift is systematically over-credited.

• Tool selection depends on use case. B2B teams need LeadsRX or Ruler Analytics for pipeline attribution. Ecommerce brands spending $500K+ monthly need Northbeam or SegmentStream for server-side tracking. Enterprises managing 20+ data sources need Improvado for automated integration and AI-driven insights. No single tool fits all scenarios.

• Implementation rigor prevents failure. 30-day parallel tracking validation, quarterly model reviews, and stakeholder education on model limitations separate successful attribution programs from expensive failures.

• Blend measurement approaches. User-level attribution + media mix modeling + incrementality testing provides triangulation that catches blind spots in any single method.

Start by auditing your current attribution: Can you track users across 80%+ of touchpoints? Do you have >1,000 conversions per month? Is your average conversion path >4 touchpoints? Use the diagnostic frameworks in this guide to choose your model, select your platform, and implement with validation rigor. Attribution done right shifts budgets from underperforming to high-ROI channels—delivering 35–50% ROAS improvements that justify the implementation investment within 6–12 months.
